Barriers to Accessing Mental Health Services Today

On a typical week, the schedule looks full, the phones keep ringing, and the waitlist still grows. People who could have stayed engaged drop off after a missed callback, a confusing portal step, or a long gap before the first visit.

Capacity is the obvious constraint, but access breaks earlier than that. Intake forms pile up, referral notes arrive incomplete, and triage decisions get forced into short slots. Language needs and low health literacy slow everything down, and every handoff creates another chance for a lost follow-up.

Even when you add hours, the hidden work (documentation, outreach, rescheduling, and between-visit check-ins) soaks up staff time. That’s why the practical question isn’t “more clinicians or not,” but which choke points support tools can safely relieve. Answer that before you pilot anything.

AI-Powered Screening and Early Detection

Those choke points often show up at the front door, when you’re trying to decide who needs help now, who can wait, and who can be routed to the right level of care without three rounds of phone tag. In practice, that means screening and triage: collecting consistent symptoms, risk flags, and basic history before a clinician ever opens the chart.

AI can help here by turning messy inputs—free-text referral notes, short questionnaires, portal messages—into a structured summary with suggested next steps, like “schedule urgent intake,” “offer skills group,” or “needs language support.” If your team gets 50 referrals on Monday, that kind of sorting can cut the time spent re-reading the same details and chasing missing fields.

Plan for false alarms and missed signals, set a clear human review step for any self-harm or violence flags, and make sure the tool can’t silently downgrade urgency because the patient wrote in plain, non-clinical language.
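One way to keep a tool from silently downgrading urgency is to treat plain-language risk phrases as a floor under whatever the model suggests. The sketch below is illustrative only: the urgency levels, the phrase list, and the `triage` function are all hypothetical, and any real phrase list would need clinical review.

```python
from dataclasses import dataclass

# Hypothetical urgency levels; your program's categories will differ.
LEVELS = ("routine", "soon", "urgent")

# Illustrative phrases only. A real list needs clinical review and testing.
RISK_PHRASES = ("can't do this anymore", "hurt myself", "hurt someone")


@dataclass
class TriageResult:
    urgency: str
    needs_human_review: bool
    reason: str


def triage(text: str, model_urgency: str) -> TriageResult:
    """Combine a model's suggested urgency with a plain-language risk check."""
    # Normalize curly apostrophes so "can’t" and "can't" match the same phrase.
    lowered = text.lower().replace("\u2019", "'")
    hit = next((p for p in RISK_PHRASES if p in lowered), None)
    if hit is not None:
        # A risk phrase sets a floor: the model cannot push this below urgent.
        return TriageResult("urgent", True, f"risk phrase: {hit!r}")
    if model_urgency not in LEVELS:
        # Unrecognized output goes to a person instead of defaulting to routine.
        return TriageResult("soon", True, "unrecognized model output")
    # Anything the model marks urgent also gets a human look.
    return TriageResult(model_urgency, model_urgency == "urgent", "model suggestion")
```

Simple substring matching will produce both false alarms and misses, which is the reason the human review step exists. The floor only guarantees that a flagged message cannot be ranked lower than a person would expect.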

Chatbots and Virtual Mental Health Assistants

That handoff is where many programs stall: the screen says “urgent,” but the patient still needs to answer basic questions, pick a time, and stay engaged until a clinician is available. Chatbots and virtual assistants can cover that gap by handling the repetitive, high-volume work—confirming contact details, collecting missing intake fields, offering plain-language explanations, and pushing scheduling links without a staff member making three callbacks.

Used well, they also support patients between visits. A bot can send brief check-ins, reinforce a care plan (“Did you try the grounding exercise?”), and route replies to the right queue. If a patient writes “I can’t do this anymore,” the assistant should trigger a crisis pathway immediately rather than reply with “helpful tips.” That means scripted escalation rules, explicit expectations for 24/7 coverage, and an auditable handoff to a human.
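As a rough sketch of what “route replies to the right queue” and “auditable handoff” could look like together, the example below records every reply in a log before responding. The queue names, phrase list, reply wording, and the `CheckInBot` class are assumptions made for illustration, not any vendor's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Illustrative phrases only; a real crisis list needs clinical sign-off.
CRISIS_PHRASES = ("can't do this anymore", "hurt myself")


@dataclass
class Handoff:
    patient_id: str
    queue: str
    message: str
    created_at: str  # UTC timestamp in ISO 8601 format


@dataclass
class CheckInBot:
    audit_log: list = field(default_factory=list)

    def handle_reply(self, patient_id: str, message: str) -> str:
        text = message.lower().replace("\u2019", "'")
        if any(phrase in text for phrase in CRISIS_PHRASES):
            queue = "crisis"
        elif "reschedule" in text or "appointment" in text:
            queue = "scheduling"
        else:
            queue = "care_team"
        # Log every handoff, not only crises, so reviews can see what was routed where.
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(Handoff(patient_id, queue, message, stamp))
        if queue == "crisis":
            # Placeholder wording; the real script comes from your crisis protocol.
            return "I'm connecting you with a person right now."
        return "Thanks. Your message has been passed to the team."
```

The log entry is written before the reply is chosen, so a failure later in the flow still leaves a record that a human can follow up on.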

These tools can drift into advice-giving, store sensitive text, or miss nuance in slang or another language. Start with narrow tasks, require clear consent language, and treat every vendor claim as something to prove in a workflow test, not a demo.

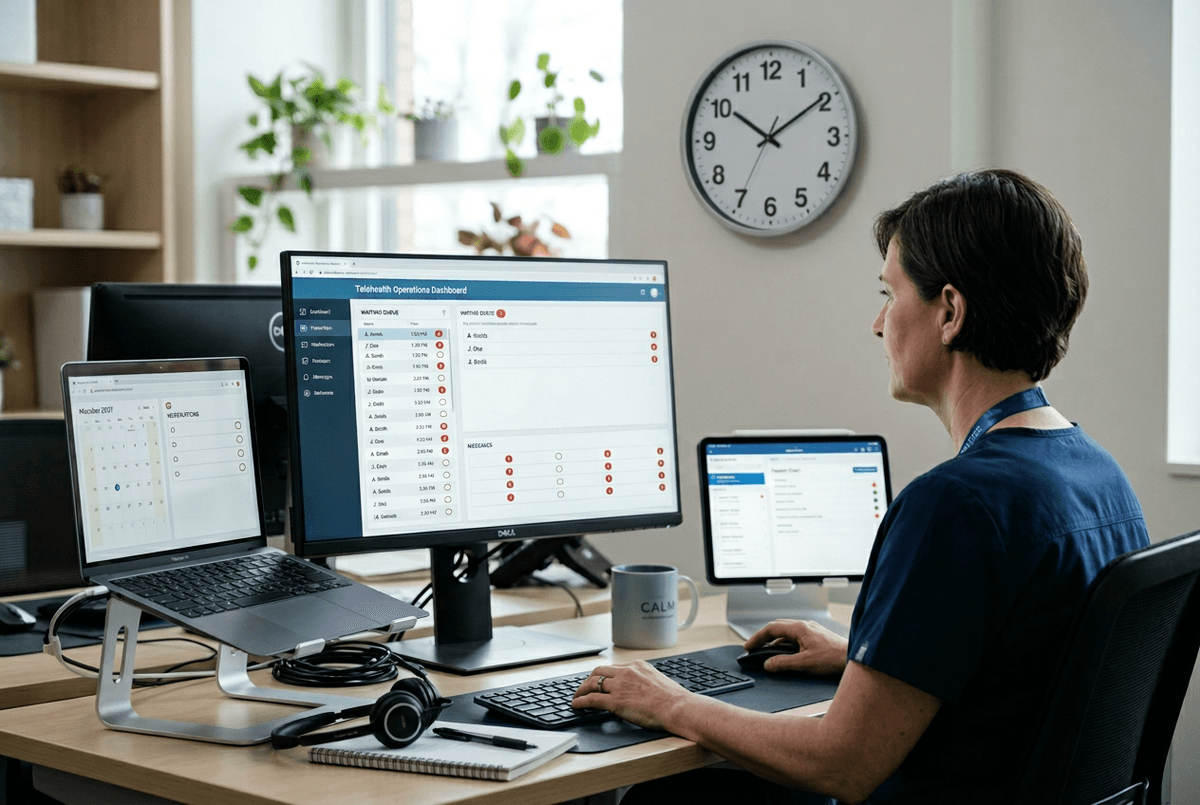

Expanding Reach Through Telehealth and Digital Platforms

Workflow tests quickly expose a simple truth: even a well-contained assistant can’t help much if the “next step” still requires a patient to travel across town, take time off work, or wait for a paper referral to get scanned. Telehealth and digital platforms expand reach when they remove those physical and administrative gates—same-week video intakes, asynchronous message-based follow-ups, and self-scheduling that actually shows the next available slot.

AI fits here as the glue between tools your team already uses. It can match appointment types to the right clinician template, suggest the shortest path to care (“group first, then individual”), and translate key instructions into plain language so patients don’t bounce at the portal. In employer or clinic programs with multiple vendors, AI-driven routing can reduce misdirected referrals by asking a few targeted questions before sending someone to EAP, outpatient therapy, or crisis services.

Telehealth breaks when broadband is weak, when “anytime messaging” turns into an unmanaged inbox, or when state licensure and consent rules don’t match your coverage area. Build staffing rules for response times, document the crisis pathway for off-hours messages, and make identity, location, and privacy checks part of the digital front door.

Personalization of Care Using Data and Machine Learning

Once identity, location, and privacy checks are part of the digital front door, the next familiar problem shows up: two people book the same “intake” slot, but one needs language support and the other needs a medication consult, and both get routed through the same steps. Personalization is what stops that one-size workflow from wasting the first week of care.

With basic data you already collect—reason for visit, prior diagnoses, preferred language, work schedule, missed-visit history—machine-learning tools can suggest the right pathway: group vs. individual, shorter check-in cadence for higher no-show risk, or extra prep materials for low health literacy. In practice, that can mean a tailored reminder sequence, a clinician template that pulls the key history into the note, and automatic prompts for measurement-based follow-ups at the right interval.

If your records under-capture certain communities, the model can end up “personalizing” by offering them fewer options. Start with rules you can explain, audit outcomes by subgroup, and require a human override that’s easy to use.
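A subgroup audit does not need to be elaborate to be useful. The sketch below compares how often each group was offered a given option and flags groups that fall well behind the best-served one. The field names (`preferred_language`, `offered_individual`) and the 10-point gap threshold are assumptions for illustration, not a recommended standard.

```python
from collections import defaultdict


def offer_rates(records, group_key="preferred_language", outcome_key="offered_individual"):
    """Return the share of each group that was offered the outcome."""
    counts = defaultdict(lambda: [0, 0])  # group -> [offered, total]
    for record in records:
        tally = counts[record[group_key]]
        if record[outcome_key]:
            tally[0] += 1
        tally[1] += 1
    return {group: offered / total for group, (offered, total) in counts.items()}


def flag_gaps(rates, max_gap=0.10):
    """List groups whose rate trails the best-served group by more than max_gap."""
    if not rates:
        return []
    best = max(rates.values())
    return sorted(group for group, rate in rates.items() if best - rate > max_gap)
```

A flagged gap is a prompt to look closer, not proof of bias. Small groups produce noisy rates, so check the counts behind any flag before acting on it.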

Cost Reduction and Scalability of Mental Health Support

If you’re trying to cut waitlists, the first budget conversation usually lands on the same question: “How many more clinicians can we afford?” Then the math doesn’t work, because the real load is the small tasks that stack up—intake clean-up, reminders, reschedules, benefit checks, and routing messages to the right queue.

AI reduces cost when it lets one staff member safely handle more volume without lowering the bar. Auto-drafting visit summaries for clinician review, translating standard instructions, and sorting incoming messages by intent (med refill, scheduling, symptom change) can keep licensed time focused on care. At scale, the biggest win is consistency: fewer dropped follow-ups because every patient gets the same next-step prompts and escalations.

If outputs aren’t auditable, staff will double-check everything, and you’ll lose the time you hoped to save. Price pilots around hard metrics—minutes saved per referral, no-show rate, time-to-first-visit—and require a clean human handoff for anything that looks like risk.
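The three pilot metrics named above are simple enough to compute from a spreadsheet export. The sketch below assumes hypothetical record shapes (a `status` field on appointments, `referred` and `first_visit` dates on referrals); adapt the field names to whatever your scheduling system actually exports.

```python
from datetime import date
from statistics import median


def no_show_rate(appointments):
    """Share of appointments marked as no-shows; None if there is no data."""
    if not appointments:
        return None
    missed = sum(1 for a in appointments if a["status"] == "no_show")
    return missed / len(appointments)


def median_days_to_first_visit(referrals):
    """Median wait in days, counting only referrals that reached a first visit."""
    waits = [
        (r["first_visit"] - r["referred"]).days
        for r in referrals
        if r["first_visit"] is not None
    ]
    return median(waits) if waits else None


def minutes_saved_per_referral(baseline_minutes, pilot_minutes):
    """Difference in average handling time per referral, before vs. during the pilot."""
    return baseline_minutes - pilot_minutes


referrals = [
    {"referred": date(2024, 3, 1), "first_visit": date(2024, 3, 6)},
    {"referred": date(2024, 3, 2), "first_visit": date(2024, 3, 11)},
    {"referred": date(2024, 3, 4), "first_visit": None},  # never scheduled
]
print(median_days_to_first_visit(referrals))
```

Referrals that never reach a first visit are excluded from the median here, so track them separately. Otherwise a pilot can look faster simply because more people dropped off.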

Challenges, Ethics, and the Future of AI in Mental Health Access

That “clean human handoff” is where ethics and operations meet. If your escalation path depends on a single inbox, an on-call phone that isn’t staffed, or a vendor SLA you can’t enforce, the tool will surface risk without getting help to the person. Build the pathway first: who reviews flags, how fast, what happens after hours, and what gets documented.

Privacy and liability are the other hard edges. Require minimum-necessary data, clear retention rules, and a way to export logs for audits and incident review. Ask vendors how they handle model updates, bias checks, and downtime, and insist you can turn features off.

The future looks like tighter, narrower automation with stronger oversight. Treat AI as capacity, not care.