When leadership says “nice to have,” what are they really worried about?

When you bring up a workplace mental health program, leaders often don’t argue that stress isn’t real. They hesitate because they can’t see a clean line from “support” to a number they can defend in a budget meeting.

“Nice to have” usually masks three worries: it won’t be used enough to matter, it will turn into an open-ended expense, or it will create expectations you can’t meet (like faster access to care than your benefits network can deliver). A fourth often sits behind them: that you’ll measure feelings instead of outcomes, then come back next quarter asking for more.

The way through is to treat this like risk management with receipts: define what’s happening now, what it costs, and what will change in ways finance can verify.

Start with a story finance can’t ignore: what’s the cost of doing nothing here?

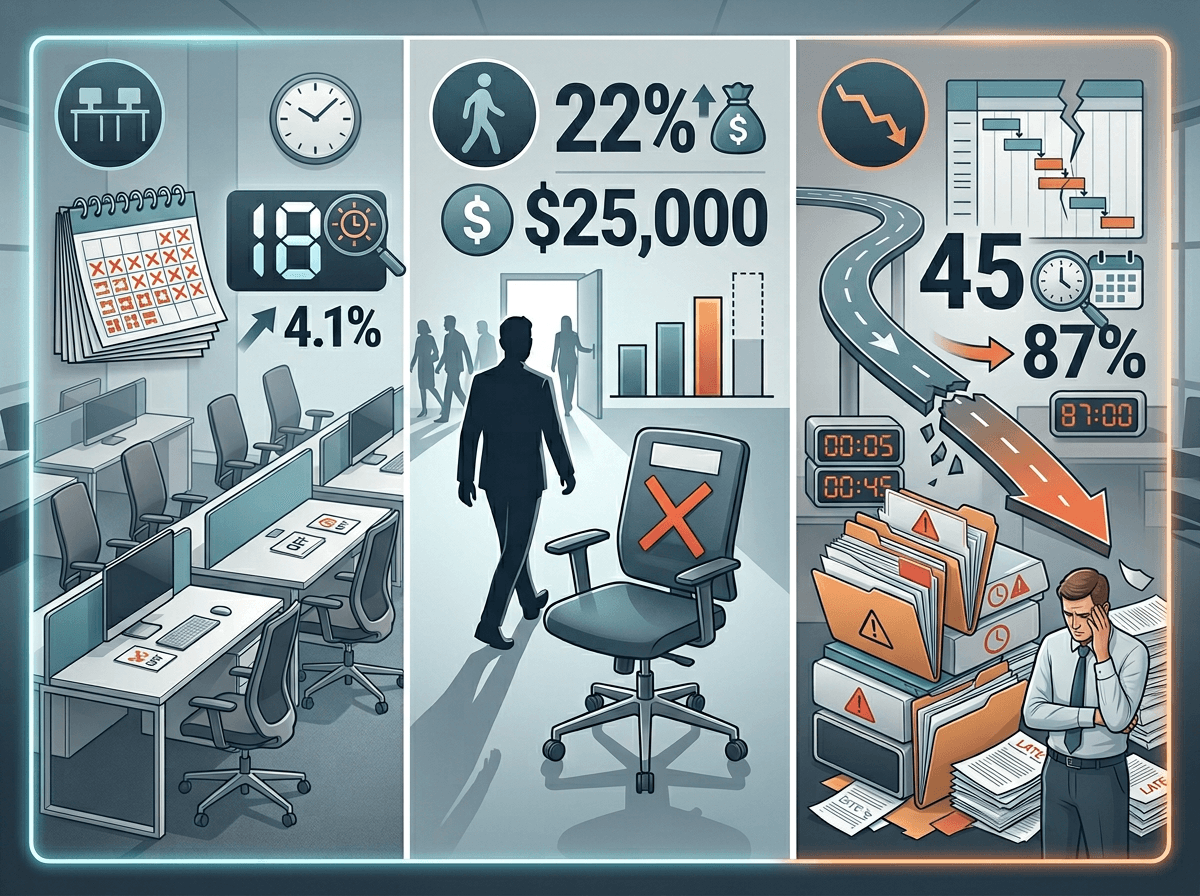

That “what’s happening now” becomes real when you describe a normal month: a key manager calls out for three days, then comes back but moves at half speed for two weeks; two high performers resign within a quarter; your team scrambles, deadlines slip, and someone else burns out filling the gaps. Finance doesn’t need a diagnosis to recognize the pattern—they need the bill.

Start with a simple cost-of-doing-nothing snapshot using numbers you already have. Pick one or two visible levers from absence, turnover, and delayed work. For absence: if employees in the affected groups average 2 extra mental-health-related days off per quarter, multiply those days by the loaded daily cost (wages plus taxes and benefits) and then by headcount in those groups. For turnover, take your internal replacement cost (recruiting fees, time-to-fill, ramp time) and apply it to the “avoidable exits” you can reasonably argue.

Keep it conservative, and write down what you are not counting—like manager time and downstream errors—so your estimate looks restrained, not inflated.
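The snapshot arithmetic above can be sketched in a few lines. This is a minimal illustration, not a costing model: every input (120 people in the affected groups, a $350 loaded day, 4 avoidable exits at $25,000 each) is a placeholder to swap for your own payroll and HRIS figures, and the function names are made up for this example.

```python
# Cost-of-doing-nothing snapshot.
# All inputs are hypothetical placeholders, not benchmarks.

def absence_cost(extra_days_per_quarter, loaded_daily_cost, headcount, quarters=4):
    """Cost of extra absence days across the affected groups."""
    return extra_days_per_quarter * loaded_daily_cost * headcount * quarters


def turnover_cost(avoidable_exits, replacement_cost):
    """Cost of the exits you can reasonably argue were avoidable."""
    return avoidable_exits * replacement_cost


absence = absence_cost(extra_days_per_quarter=2, loaded_daily_cost=350, headcount=120)
turnover = turnover_cost(avoidable_exits=4, replacement_cost=25_000)
total = absence + turnover

# Write down what the estimate leaves out so it reads as restrained.
not_counted = ["manager time", "downstream errors"]

print(f"Absence:  ${absence:,}")
print(f"Turnover: ${turnover:,}")
print(f"Total:    ${total:,} per year (excludes {', '.join(not_counted)})")
```

Keeping each lever in its own function makes it easy for finance to challenge one assumption at a time without redoing the whole estimate.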

Which outcomes will you be held accountable for in 6–12 months?

Once you’ve shown a conservative “do nothing” bill, the question shifts to what you’ll be judged on by the next budget cycle. In practice, nobody expects you to prove that stress disappeared. They will ask whether business indicators moved in the groups that were hurting.

Pick two primary outcomes you can influence and finance can verify in 6–12 months. For many mid-sized companies, that’s (1) avoidable turnover in a few high-risk roles or teams, and (2) absence patterns that show up in payroll or HRIS (unscheduled days off, short-term leave starts, or repeat callouts). Add one secondary outcome that signals early traction, such as managers’ self-reported capacity to address performance issues promptly, measured by a short monthly pulse survey of the manager cohorts.

Be realistic about what you can’t own. Claims cost and clinical outcomes usually lag and sit outside HR’s control. If you promise them anyway, leadership will treat the whole plan like a guess.

Your options aren’t ‘do nothing’ vs ‘big program’—what’s right-sized for your risk?

Those outcomes you pick force a practical question: where is the risk concentrated, and how much coverage do you actually need to move the numbers by the next cycle? If turnover is spiking in two functions, a company-wide “wellness overhaul” is more than you can defend. If unscheduled absence is broad-based, a tiny perk won’t show up in payroll.

Lay out three right-sized options that match the risk you’ve already priced. Option A: tighten what you already pay for—EAP relaunch, manager referral paths, and a short “how to use benefits” push in the highest-risk teams. Option B: add a targeted layer—manager training for those teams plus fast access to a few sessions (via a vendor or a narrow network add-on). Option C: broader coverage—company-wide training and expanded access, with extra support for managers.

Each option should name what it does not do. For example, training without access to care can frustrate employees, and added sessions without manager behavior change can still leave hot spots. The next step is to put a price on each option in a way finance recognizes.

How to price it without getting laughed out of the room

That price needs to look like something finance has seen before: a fixed annual amount, a per-employee-per-month rate, or a capped pilot budget. If you can’t state a ceiling on day one, it will read like “open-ended demand,” even if the intent is support.

Put each option on one line with the same cost parts: vendor fees (or network add-on), expected utilization assumptions, internal time, and any one-time setup. Example: “Option B: $X PEPM for 6 sessions/employee/year, capped at Y% utilization, plus $Z for manager training.” If you don’t have usage data, anchor to conservative ranges (like 3–8% in the first quarter after a relaunch) and show what happens at the high end.
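One way to show “what happens at the high end” is to run the same cost parts at both ends of the utilization range. The sketch below assumes a hypothetical pricing structure (a flat PEPM fee plus a per-session fee) and hypothetical numbers throughout. If your vendor quotes pure PEPM, set `fee_per_session` to 0. It also assumes every user takes all six sessions, which deliberately overstates usage cost.

```python
# Price one option at the low and high end of assumed utilization.
# Pricing structure and all figures are hypothetical assumptions.

def option_cost(headcount, months, pepm, utilization_pct,
                sessions_per_user, fee_per_session,
                one_time_setup, internal_hours, internal_hourly_rate):
    """Total cost from the same parts for every option."""
    vendor_fixed = pepm * headcount * months
    users = headcount * utilization_pct / 100
    vendor_usage = users * sessions_per_user * fee_per_session
    internal_time = internal_hours * internal_hourly_rate
    return vendor_fixed + vendor_usage + one_time_setup + internal_time


option_b = dict(
    headcount=200,            # people in the targeted teams
    months=6,                 # pilot length
    pepm=4,                   # flat fee per employee per month
    sessions_per_user=6,      # assumes each user takes every session
    fee_per_session=100,
    one_time_setup=5_000,     # e.g. manager training
    internal_hours=80,        # comms, HR ops, manager time
    internal_hourly_rate=60,
)

low = option_cost(utilization_pct=3, **option_b)
high = option_cost(utilization_pct=8, **option_b)

print(f"Option B at 3% utilization: ${low:,.0f}")
print(f"Option B at 8% utilization: ${high:,.0f} (use this as the ceiling)")
```

Quoting the high-end figure as the cap answers the “open-ended demand” objection before it is raised.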

Internal work is a cost too: comms, manager time, and HR ops all draw on limited capacity. Price that time, even roughly, then set up the next question: what level of change would make that spend worth keeping?

Make the ROI believable: conservative ranges, not miracles

That “worth keeping” question is where ROI usually breaks—because people jump from spend to a single shiny payoff number. Finance will trust you more if you show a range tied to the two outcomes you already chose, with math they can audit.

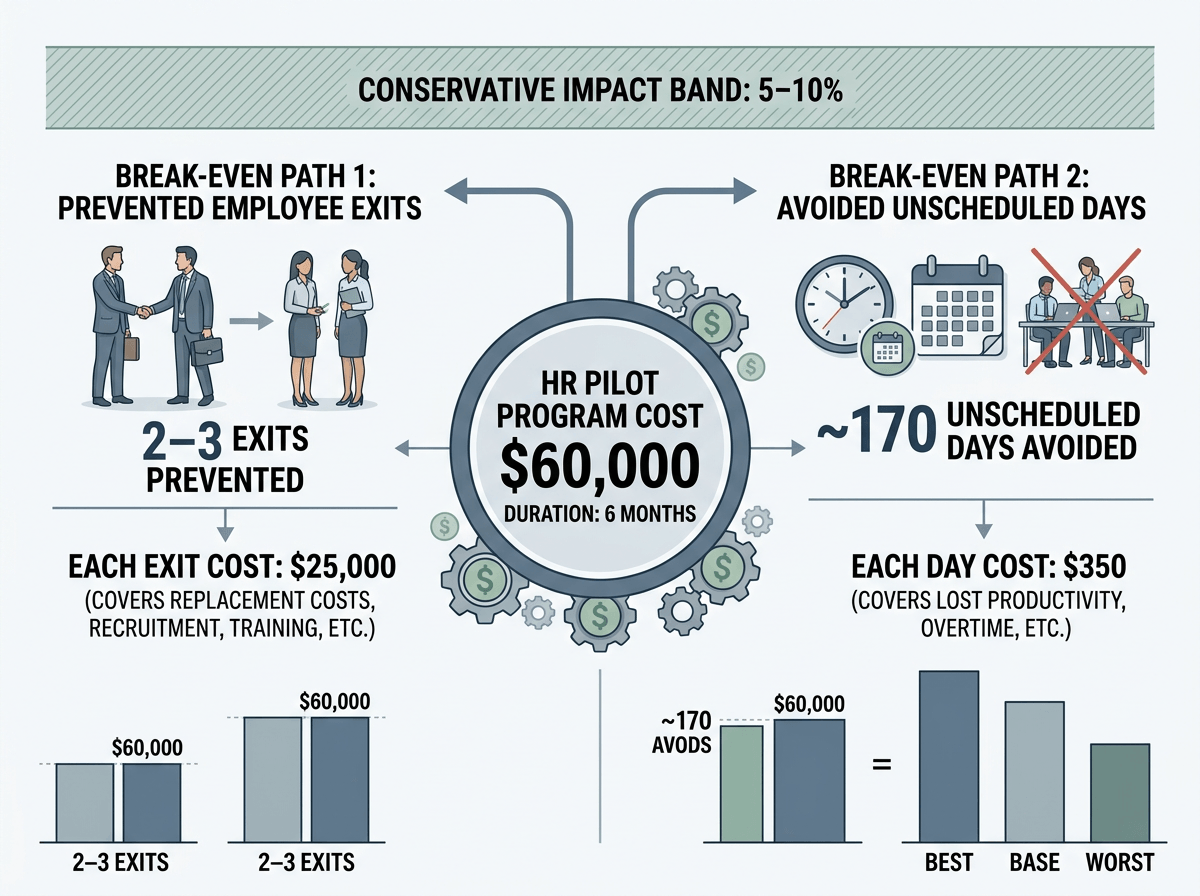

Start by converting your program cost into a break-even target. If the pilot costs $60,000 for six months, what does it take to get $60,000 back through fewer exits or fewer unscheduled days? Example: if one avoidable exit costs $25,000 to replace, break-even is 2.4 exits, so preventing 3 clears the bar and 2 falls short; if an unscheduled day costs $350 loaded, break-even is about 171 days avoided. Then offer a conservative impact band (say 5–10% improvement in the targeted group), and show best, base, and worst cases.
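The break-even math and the best/base/worst band can be laid out so finance can audit each step. In this sketch the $60,000, $25,000, and $350 figures come from the example above. The baselines (20 exits and 2,400 unscheduled days in the pilot groups) are hypothetical, and treating “worst” as 0% improvement is an assumption made here to show the full downside.

```python
import math

PROGRAM_COST = 60_000   # six-month pilot
EXIT_COST = 25_000      # replacement cost per avoidable exit
DAY_COST = 350          # loaded cost per unscheduled day

# Hypothetical six-month baselines for the pilot groups.
BASELINE_EXITS = 20
BASELINE_DAYS = 2_400


def break_even_units(program_cost, value_per_unit):
    """Whole units (exits or days) needed to recover the program cost."""
    return math.ceil(program_cost / value_per_unit)


def net_return(improvement_pct):
    """Savings minus program cost at a given improvement in both outcomes."""
    exits_avoided = BASELINE_EXITS * improvement_pct / 100
    days_avoided = BASELINE_DAYS * improvement_pct / 100
    savings = exits_avoided * EXIT_COST + days_avoided * DAY_COST
    return savings - PROGRAM_COST


exits_needed = break_even_units(PROGRAM_COST, EXIT_COST)
days_needed = break_even_units(PROGRAM_COST, DAY_COST)
print(f"Break-even: {exits_needed} exits prevented or {days_needed} days avoided")

scenarios = {"worst": 0, "base": 5, "best": 10}
for name, pct in scenarios.items():
    print(f"{name:>5} ({pct}%): net ${net_return(pct):,.0f}")
```

Rounding break-even up to whole exits and whole days keeps the target honest, since a fraction of a prevented resignation cannot be observed.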

Call out what can make results look “bad” even if the program works: slow rollout, manager nonparticipation, or utilization that peaks in teams you didn’t target. That’s why the next step needs a pilot with a checkpoint tied to those numbers.

The ask: a pilot leaders can say yes to—and a checkpoint they can’t dodge

That checkpoint is your leverage: ask for a time-boxed pilot with a hard stop and a pre-set decision date. Propose 90–120 days in 2–3 high-risk groups, a capped budget, and a clear “what we’re changing” package (manager training plus faster access or a tightened EAP relaunch—not a menu of perks).

Put the checkpoint on the calendar now: “On [date], we review these two numbers.” Use your primary outcomes (avoidable exits in the pilot groups; unscheduled absence rate), each with a baseline and a break-even threshold, plus a minimum participation bar (manager completion rate and utilization within the expected range). Name the downside up front: if the data isn’t clean, the pilot becomes another feel-good cost with no proof.
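Writing the decision rule down before the pilot starts makes the checkpoint harder to renegotiate later. The following is one possible sketch, not a standard method: the 80% manager completion bar, the 3–8% utilization range, and the three verdict labels are all assumptions to replace with whatever you and finance agree on.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    baseline_exits: float
    observed_exits: float
    baseline_absence_days: float
    observed_absence_days: float
    manager_completion_pct: float
    utilization_pct: float


def review(cp, program_cost, exit_cost, day_cost,
           min_completion_pct=80, utilization_range=(3, 8)):
    """Apply the pre-agreed rule: participation bar first, then break-even."""
    low, high = utilization_range
    participation_ok = (
        cp.manager_completion_pct >= min_completion_pct
        and low <= cp.utilization_pct <= high
    )
    if not participation_ok:
        # The pilot was not run as designed, so the outcome data proves little.
        return "inconclusive"
    savings = (
        (cp.baseline_exits - cp.observed_exits) * exit_cost
        + (cp.baseline_absence_days - cp.observed_absence_days) * day_cost
    )
    return "continue" if savings >= program_cost else "stop or redesign"


# Hypothetical checkpoint data for the pilot groups.
result = review(
    Checkpoint(baseline_exits=20, observed_exits=18,
               baseline_absence_days=2_400, observed_absence_days=2_350,
               manager_completion_pct=85, utilization_pct=5),
    program_cost=60_000, exit_cost=25_000, day_cost=350,
)
print(result)
```

Checking participation before outcomes separates “the program did not work” from “the program was never really delivered,” which are different decisions for leadership.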